[updated] This post has been updated on 4 July 2015 to reflect the location of stations where trains did not operate as scheduled.

The Washington Metropolitan Area Transit Authority (Metro) was created by an interstate compact in 1967 to plan, develop, build, finance, and operate a balanced regional transportation system in the national capital area. Metro began building its rail system in 1969, acquired four regional bus systems in 1973, and began operating the first phase of Metrorail in 1976. Today, Metrorail serves 91 stations and has 117 miles of track. Metrobus serves the nation's capital 24 hours a day, seven days a week with 1,500 buses. Metrorail and Metrobus serve a population of 5 million within a 1,500-square mile jurisdiction. Metro began its paratransit service, MetroAccess, in 1994; it provides about 2.3 million trips per year.

The Washington Metropolitan Area Transit Authority (WMATA) operates a significant number of trains on its network every day, and accrues delays and other operational issues over time. WMATA logs these delays in the Daily Service Report (DSR), which covers incidents that affect its trains and cause passenger delays of three minutes or more at a stop. Raw data used for this and other analyses can be found on the WMATA website at:

http://www.wmata.com/rail/service_reports/viewPage_update.cfm

This post takes the three months of data from April to June 2015 (Q2 CY2015) and points out trends and other data of interest, especially changes in delay rates over time, delay types, and how these affected each of the system's six rail lines during that period.

Delays

Because of the nature of the DSR, any incident listed in it had a negative impact on train and/or passenger operations. Each entry in the DSR notes the time of the delay, the line and station, and the cause. Most entries include the delay length, but some do not. Wherever WMATA gives no estimate, the delay length has been left blank. This may leave significant delays out of the minute totals; however, filling in the missing values would introduce error and potentially incorrect information.
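As a rough illustration of the "leave it blank" approach, the sketch below pulls a delay length out of a DSR-style sentence and returns `None` when no figure is stated. The regular expression and the function names are my own assumptions for this example, not the processing actually used for this post, and real DSR wording may vary more than the pattern allows.

```python
import re
from typing import Iterable, Optional

# Hypothetical pattern: matches wording such as "delays up to 60 minutes"
# or "a 12-minute gap in service". Clock times like "10:30 a.m." do not match.
_MINUTES = re.compile(r"(\d+)[- ]minutes?\b", re.IGNORECASE)


def delay_minutes(entry: str) -> Optional[int]:
    """Return the stated delay in minutes, or None if the entry gives no figure."""
    match = _MINUTES.search(entry)
    return int(match.group(1)) if match else None


def total_minutes(entries: Iterable[str]) -> int:
    """Sum only the entries that state a delay; blanks are skipped, not guessed."""
    return sum(m for m in map(delay_minutes, entries) if m is not None)


if __name__ == "__main__":
    sample = [
        "A Glenmont-bound Red Line train at Shady Grove was delayed due to "
        "a signal problem. Passengers experienced delays up to 60 minutes.",
        "A Blue Line train at Largo Town Center did not operate, "
        "resulting in a 12-minute gap in service.",
        "A Red Line train was expressed for improved train spacing.",
    ]
    for text in sample:
        print(delay_minutes(text))
    print(total_minutes(sample))
```

Skipping the blanks keeps the totals conservative: they can undercount, but they never include a number WMATA did not report.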

Data Comments

Due to the format of some data, the classification of delay has been changed in certain circumstances:

- When an incident occurred at a station served by multiple lines, the incident was classified under the line that caused the delay. For instance, if an Orange line train was offloaded due to brake problems at Ballston (affecting the Silver line as well), the incident was classified under the Orange line heading.

- Instances of "passenger struck by the train" have been reclassified as a Medical Emergency

- "Track obstruction" has been classified under Track Problem

- "Tresspassers" and "unauthorized persons on the track" have been classified under Police Activity

- Escalator outages that caused trains to skip stations and induced delays have been classified as Operational Problem

- Trains that are noted as "expressed" (meaning they did not service a particular station or stations) do not have a delay in minutes associated with them, as none is provided by WMATA. There is certainly a delay associated with a train skipping a stop; however, it would not be proper to assign a delay to these without further study.
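The reclassification rules above could be expressed as a simple lookup. This is only a sketch: the dictionary keys are hypothetical normalized spellings of the DSR phrases, and the function name is made up for illustration rather than taken from the processing behind this post.

```python
# Hypothetical lookup table built from the rules listed above.
# Keys are lower-cased DSR phrases; values are the category used in this post.
RECLASSIFY = {
    "passenger struck by the train": "Medical Emergency",
    "track obstruction": "Track Problem",
    "trespassers": "Police Activity",
    "unauthorized persons on the track": "Police Activity",
    "escalator outage": "Operational Problem",
}


def classify(raw_cause: str) -> str:
    """Map a raw DSR cause to its category; unknown causes pass through unchanged."""
    cleaned = raw_cause.strip()
    return RECLASSIFY.get(cleaned.lower(), cleaned)


if __name__ == "__main__":
    for cause in ["Track obstruction", "Trespassers", "Brake problem"]:
        print(cause, "->", classify(cause))
```

Causes that are not in the table, such as a brake problem, keep their original label, so the mapping only touches the cases called out in the list.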

Summary

- Total delay, April 2015: 572 delays, or 3763 minutes

- Total delay, May 2015: 520 delays, or 3519 minutes

- Total delay, June 2015: 698 delays, or 4709 minutes

- Total delay, 2Q CY2015: 1790 delays, or 11191 minutes (avg 7 min/delay)
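For readers who want to re-derive the quarterly figures, here is a small sketch that tallies the three monthly lines as printed above. The variable names are illustrative only. Note that the monthly minutes, as printed, add up to 11991 rather than the 11191 shown on the quarterly line; the sketch only reports the sum and does not try to determine which figure contains the slip.

```python
# Monthly figures exactly as printed in the summary: (number of delays, minutes).
monthly = {
    "April 2015": (572, 3763),
    "May 2015": (520, 3519),
    "June 2015": (698, 4709),
}

total_delays = sum(count for count, _ in monthly.values())
summed_minutes = sum(minutes for _, minutes in monthly.values())
avg_minutes = summed_minutes / total_delays

if __name__ == "__main__":
    print(f"Delays in the quarter: {total_delays}")
    print(f"Minutes (sum of monthly lines): {summed_minutes}")
    print(f"Average minutes per delay: {avg_minutes:.1f}")
```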

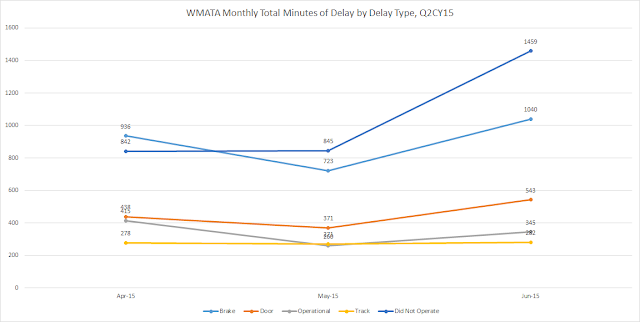

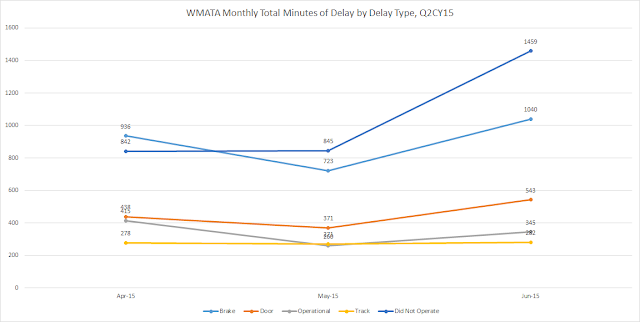

The top five causes of Metrorail train delays accounted for a total of 9048 minutes of delay throughout the system, or 81% of the total delayed time. The graph below shows the breakdown of the top five types of delay over each of the three months as measured by total minutes of delay.

[Figure: WMATA Monthly Total Minutes by Type of the Top 5 Causes of Delay for Q2 CY15]

- Track problems caused 831 minutes of delay (13 minutes per delay on average)

- Door problems caused a total of 1352 minutes of delay (8 minutes per delay on average)

- Operational problems caused 1020 minutes of delay (7 minutes per delay on average)

- Trains that did not operate caused 3146 minutes of delay (6 minutes per delay on average)

- Brake problems caused 2699 minutes of delay (6 minutes per delay on average)
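The 81% share quoted for the top five causes can be checked from the per-cause minutes listed above. This is a minimal sketch using the quarterly total of 11191 minutes as stated in the summary; the names are illustrative only.

```python
# Minutes of delay per cause, as listed above.
top_five_minutes = {
    "Track problems": 831,
    "Door problems": 1352,
    "Operational problems": 1020,
    "Did not operate": 3146,
    "Brake problems": 2699,
}

# Quarterly total as stated in the Summary section.
QUARTER_TOTAL_MINUTES = 11191

top_five_total = sum(top_five_minutes.values())
share = top_five_total / QUARTER_TOTAL_MINUTES

if __name__ == "__main__":
    print(f"Top five causes: {top_five_total} minutes ({share:.0%} of the total)")
    # Rank the causes by total minutes, largest first.
    for cause, minutes in sorted(top_five_minutes.items(), key=lambda kv: -kv[1]):
        print(f"{cause}: {minutes} min ({minutes / QUARTER_TOTAL_MINUTES:.0%})")
```

Sorted by total minutes rather than by average delay length, trains that did not operate and brake problems together make up well over half of the top-five minutes.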

Most Frequent Cause of Delay

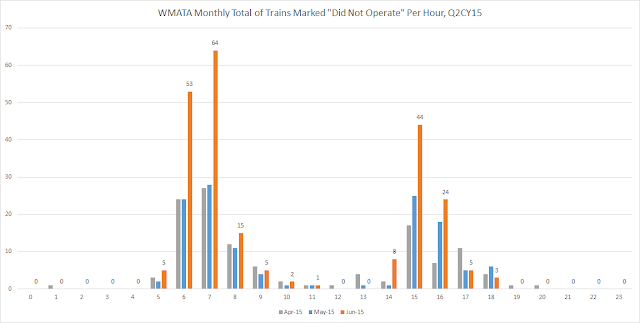

The most frequent cause of delay during the second quarter of 2015 was trains that did not operate as scheduled. This can happen if the rail cars needed to run the scheduled train are unavailable, if there is no operator, or for other unspecified reasons. One example: "a Franconia-Springfield-bound Blue Line train at Largo Town Center did not operate, resulting in a 12-minute gap in service." (6/15/2015). Each train that did not operate caused a service gap of approximately 6 minutes on average.

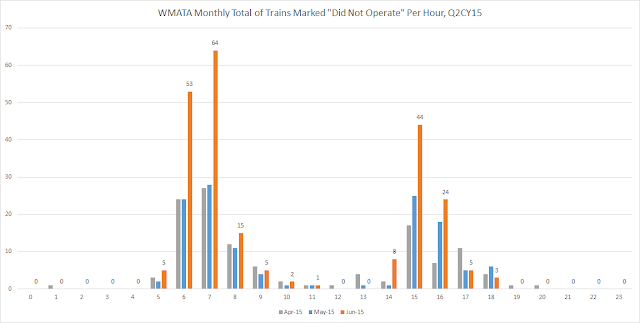

The graph below shows the time of day, for each of the three months in the quarter, when trains did not operate as scheduled. The number of trains that did not operate rose slightly from 124 in April to 128 in May, and then jumped to 223 in June. The increase in this type of delay event can likely be partially attributed to all 100 4000-series train cars being pulled from revenue service during the quarter (http://www.wmata.com/rider_tools/metro_service_status/advisories.cfm?AID=4986) over issues with doors opening while moving. Without the 100 4000-series cars available, WMATA would also have to decrease the number of 1000-series rail cars in service, since those are not allowed to be either the head-end or rear pair of cars on any train consist. Fifty of the 4000-series rail cars have been put back into service as of this writing in early July.

[Figure: WMATA Monthly Totals of Trains that "Did Not Operate" per Hour (0hr-23hr) for Q2 CY15]
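To put the monthly "Did Not Operate" counts in perspective, the sketch below computes the month-over-month change from the three figures quoted in the text. The helper name `pct_change` is made up for this example.

```python
# Monthly counts of trains that did not operate, as quoted in the text.
did_not_operate = {"April": 124, "May": 128, "June": 223}


def pct_change(old: int, new: int) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


quarter_total = sum(did_not_operate.values())

if __name__ == "__main__":
    months = list(did_not_operate)
    for prev, cur in zip(months, months[1:]):
        change = pct_change(did_not_operate[prev], did_not_operate[cur])
        print(f"{prev} -> {cur}: {change:+.1f}%")
    print(f"Quarter total: {quarter_total}")
```

The April to May change is small, while May to June is an increase of roughly three quarters, which is what makes June stand out.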

Diving into the data a little, we can see which stations are affected by these trains marked "Did Not Operate." The majority of the increase in these trains is concentrated at three stations, one on Orange and two on Green: Vienna, Greenbelt, and Branch Avenue. All three of these stations are termini, where the vast majority of Metrorail trains originate. For the quarter, these three stations account for 48% of all trains that did not operate.

[Figure: WMATA Trains Per Station Classified "Did Not Operate" for Q2 CY15, grouped by Metrorail line color]

The second most-frequent cause of train delay is trains being expressed past a station. This terminology means that the train does not stop, and instead continues on to the next station on its route. This is usually performed by WMATA "for schedule adherence/improved train spacing" on the line, especially during recovery from an earlier incident that caused train bunching.

[Figure: WMATA Service Delays by Type per Month for Q2 CY15]

Most Delayed Line

For all three months in the quarter, the Red line "won" with the most delays, for a total of 516 over the quarter. Note also that the Red line is the single longest in the system, and thus it would be expected to have proportionally more delays than the rest.

[Figure: WMATA Service Delays by Line for Q2 CY15]

Least Delayed Line

The least-delayed line throughout the Metro system during Q2 of 2015 was the Blue Line, with 165 total delays. The Blue line has higher headways (thus, fewer trains) than other lines in the Metrorail system. In addition, a significant portion of the Blue line route is shared with others (Yellow, Silver, and Orange), all of which had a higher number of delays that could potentially impact Blue line performance as well.

Longest Delay

Five delays tied for the longest delay/impact to customers, at up to 60 minutes. The first of these during the second quarter occurred on Friday April 3rd, 2015 at 8:02am:

A Glenmont-bound Red Line train outside Cleveland Park experienced a brake problem. A Glenmont-bound Red Line train was used to recover the incident train. Both trains were moved to the platform and offloaded. Several trains were single tracked around the incident train and several trains were offloaded and turned back for schedule adherence/improved train spacing. Passengers experienced delays up to 60 minutes.

Of the same length was another delay on Thursday April 16th, beginning at 9:39am:

A Greenbelt-bound Yellow Line train at Fort Totten reported a track problem. Green Line service was suspended between Prince Georges Plaza and Georgia Avenue until approximately 10:30 a.m. Several trains were offloaded and turned back for schedule adherence/improved train spacing. Passengers experienced delays up to 60 minutes.

The next delay of 60 minutes was not until a month later on Thursday May 21st at 3:36pm:

Orange/Silver/Blue line train service was suspended between Eastern Market & Minnesota Ave and Eastern Market & Benning Road due to a power problem. Shuttle bus service was provided. Power was restored at approximately 4:50 p.m. Passengers experienced delays up to 60 minutes.

Monday, June 1st brought the next 60-minute delay at 7:21pm:

A Glenmont-bound Red Line train at Shady Grove was delayed due to a signal problem. Passengers experienced delays up to 60 minutes.

Rounding out the quarter, a 60-minute delay was reported on Monday, June 29th beginning at 5:34pm:

A Shady Grove-bound Red Line train outside Farragut North experienced a brake problem. A Grosvenor-Strathmore-bound Red Line train at Metro Center was offloaded and used to recover the incident train, which was moved to the platform and offloaded. Several trains were single tracked around the incident train and several trains were offloaded and turned back for schedule adherence/improved train spacing. Passengers experienced delays up to 60 minutes.

Notes

While the link to the raw WMATA DSR data is provided above, the processed and analyzed dataset is not included. This can be provided upon request by contacting me @srepetsk on Twitter or at blog [at] srepetsk [dot] net via email.

While I have done my best to ensure the accuracy of the data contained in this post, it is provided as-is with no guarantee that it is correct. Please feel free to contact me if you note an error, or suspect there may be one.

The author of this content does not speak for and is in no way associated with the Washington Metropolitan Area Transit Authority other than being a rider of the system.